Diagram Detection at Scale

Mathematics is not just text. Diagrams, figures, and visual representations play a role in mathematical reasoning that is poorly understood, in part because studying them systematically has always required a prohibitive amount of manual work. A colleague spent months browsing 50,000 PDF pages to find diagrams across three journals. I offered to do it faster, at a larger scale, and with comparable precision. That was 2019. We have not stopped since.

The pipeline processes scientific publications at scale – currently around 2.2 million pages – using a trained YOLO object detection model to locate diagrams with high recall and controlled false positive rates. The hard part is not detection per se, but training a model robust enough to capture instances that fall outside your current definition of what a diagram is. That requires deliberate dataset construction, hard-example mining, and iterative human-in-the-loop curation. The goal is not to eliminate human judgment but to concentrate it: reviewers see only the cases that matter.
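One way to picture "concentrating" human judgment is a confidence-based triage step that sits between detection and review. The sketch below is illustrative only: the `Detection` record, the `triage` function, and both thresholds are assumptions made for this example, not the pipeline's actual code or settings. It keeps clear hits, sets aside clear misses, and queues the uncertain middle band for a reviewer, most ambiguous first.

```python
from dataclasses import dataclass
from typing import Iterable, List, Tuple


@dataclass(frozen=True)
class Detection:
    """Hypothetical record for one candidate diagram on a page."""
    page_id: str
    confidence: float  # detector score in [0, 1]


def triage(
    detections: Iterable[Detection],
    accept_at: float = 0.9,      # assumed threshold, not a tuned value
    reject_below: float = 0.2,   # assumed threshold, not a tuned value
) -> Tuple[List[Detection], List[Detection], List[Detection]]:
    """Split detections into (accepted, needs_review, rejected)."""
    if not 0.0 <= reject_below <= accept_at <= 1.0:
        raise ValueError("need 0 <= reject_below <= accept_at <= 1")

    accepted: List[Detection] = []
    review: List[Detection] = []
    rejected: List[Detection] = []
    for det in detections:
        if det.confidence >= accept_at:
            accepted.append(det)
        elif det.confidence < reject_below:
            rejected.append(det)
        else:
            review.append(det)

    # Show the most ambiguous candidates first: those closest to the
    # midpoint of the review band.
    midpoint = (accept_at + reject_below) / 2
    review.sort(key=lambda d: abs(d.confidence - midpoint))
    return accepted, review, rejected


if __name__ == "__main__":
    sample = [
        Detection("p1", 0.95),
        Detection("p2", 0.50),
        Detection("p3", 0.10),
        Detection("p4", 0.85),
    ]
    accepted, review, rejected = triage(sample)
    print("accepted:", [d.page_id for d in accepted])
    print("review:  ", [d.page_id for d in review])
    print("rejected:", [d.page_id for d in rejected])
```

In practice the rejected band would still be sampled from time to time, since diagrams that fall outside the current definition are exactly the ones a fixed threshold tends to hide.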

The result is a research infrastructure that supports two very different kinds of questions. At scale, we can ask representational questions across the corpus: do certain mathematical disciplines use more visual reasoning? Has that changed over time? And for the close-reading cases – the "great unseen" that large-scale methods reveal – we can go deep. The model and training data are publicly available on Hugging Face.

Orchestrating AI-Assisted Development

Building with AI is not just about prompting. It is about knowing which tool to use at which stage – and building the infrastructure to move smoothly between them. dh4pmp-cli grew out of a practical need: I work across Claude (for design and dialogue), Claude Code (for implementation), and my own codebase, and the handoffs between them were the bottleneck. So I built the bridge.

The core idea is a session architecture that tracks the state of an AI-assisted development workflow. When a design discussion in Claude reaches the point of concrete specification, the CLI packages that specification and hands it to Claude Code. When Claude Code finishes, it produces a structured handoff that comes back to Claude for review and documentation. Clipboard integration, git versioning, and a consistent project scaffold mean that switching between agents is a single command rather than a context-management problem. The human stays in the loop for the decisions that matter and out of the way for everything else.
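To make the idea of a structured handoff concrete, here is a minimal sketch of what such a record could look like. The `Handoff` class and all of its field names are hypothetical, invented for illustration; they are not taken from dh4pmp-cli. The point is only that a handoff serialised to plain JSON can be copied to the clipboard, committed to git, and read back by either agent.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import List, Optional


@dataclass
class Handoff:
    """Hypothetical handoff record passed between agents."""
    source: str                       # e.g. "claude" (design and dialogue)
    target: str                       # e.g. "claude-code" (implementation)
    summary: str                      # what was decided or done
    tasks: List[str] = field(default_factory=list)
    git_commit: Optional[str] = None  # commit the handoff refers to, if any

    def to_json(self) -> str:
        """Serialise to stable, human-readable JSON."""
        return json.dumps(asdict(self), indent=2, sort_keys=True)

    @classmethod
    def from_json(cls, text: str) -> "Handoff":
        """Rebuild a handoff from its JSON form."""
        return cls(**json.loads(text))


if __name__ == "__main__":
    spec = Handoff(
        source="claude",
        target="claude-code",
        summary="Add a CSV export for review decisions.",
        tasks=["write export function", "add a test"],
    )
    text = spec.to_json()
    print(text)
    assert Handoff.from_json(text) == spec
```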

The most transferable insight is about documentation. Keeping a README.md that is simultaneously useful to a human, to Claude in a future session, and to Claude Code as an entry point is a non-trivial design problem. Overwriting is not enough – new information has to be integrated with existing content, preserving what was hard-won. Getting that right turns out to be one of the most valuable things a maintained AI development pipeline can do.
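The difference between overwriting and integrating can be shown with a small sketch. It assumes a README organised under `## ` headings and uses a deliberately naive rule: existing lines are never dropped, and lines from an update are appended to the matching section only if they are not already there. The function names are hypothetical and this is not how dh4pmp-cli does it; a real merge needs judgment about contradictions and stale content, which this sketch does not attempt.

```python
from typing import Dict, List


def split_sections(markdown: str) -> Dict[str, List[str]]:
    """Map each '## ' heading to its lines; '' holds the preamble."""
    sections: Dict[str, List[str]] = {"": []}
    current = ""
    for line in markdown.splitlines():
        if line.startswith("## "):
            current = line[3:].strip()
            sections.setdefault(current, [])
        else:
            sections[current].append(line)
    return sections


def merge_readme(existing: str, update: str) -> str:
    """Integrate `update` into `existing` without discarding old lines."""
    merged = split_sections(existing)
    for heading, new_lines in split_sections(update).items():
        kept = merged.setdefault(heading, [])
        # Drop trailing blank lines so additions attach to the section body.
        while kept and not kept[-1].strip():
            kept.pop()
        seen = {line.strip() for line in kept}
        for line in new_lines:
            text = line.strip()
            if text and text not in seen:
                kept.append(line)
                seen.add(text)

    blocks: List[str] = []
    for heading, lines in merged.items():
        body = "\n".join(lines).strip("\n")
        block = f"## {heading}\n{body}" if heading else body
        if block.strip():
            blocks.append(block.rstrip())
    return "\n\n".join(blocks) + "\n"


if __name__ == "__main__":
    old = "# Project\n\n## Usage\nRun init first.\n\n## Notes\nKeep tests fast.\n"
    new = "## Usage\nRun init first.\nRun sync after each session.\n\n## Roadmap\nAdd caching.\n"
    print(merge_readme(old, new))
```

Sections that the update does not mention survive untouched, which is the property a plain overwrite loses.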

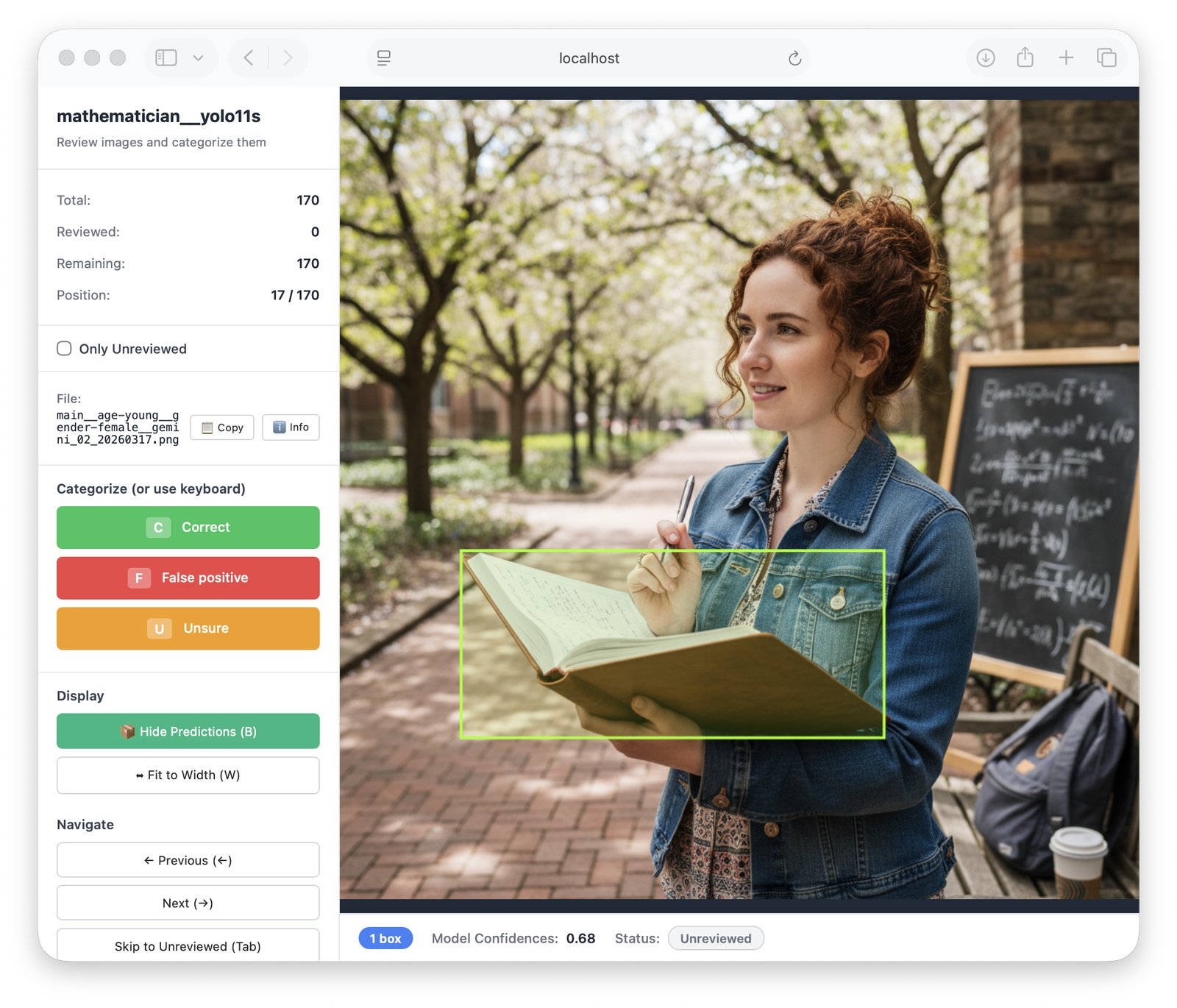

Curator: Human-in-the-Loop at Speed

Every machine learning pipeline eventually needs a human. The question is how to make that as fast and focused as possible. Curator is a configurable web-based annotation tool built around a simple insight: most validation tasks do not require drawing boxes – they require making a decision. Accept, reject, or flag for follow-up. Curator reduces that to a single keypress.

The tool is a Flask application with a browser frontend and a SQLite or MySQL backend, configurable around where images live: local folders, file lists, or zip archives. It sits at the junction between detection and training: after a model run, Curator lets a reviewer move through thousands of candidates rapidly, producing a structured output that feeds directly into the next pipeline stage. It has been used across several projects (diagram detection, historical photograph collections, wedding photo analysis), always for the same core task: classify a large set of images against a predefined category list, as quickly as human judgment allows.
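The single-keypress idea comes down to a small mapping from keys to decisions plus one row written per image. The sketch below uses only Python's standard `sqlite3` module and leaves out the Flask layer entirely. The key bindings, table layout, and function names are assumptions made for illustration, not Curator's actual schema or API.

```python
import sqlite3
from typing import List, Optional

# Hypothetical key bindings: one key per decision.
KEYMAP = {"a": "accept", "r": "reject", "f": "flag"}


def open_store(path: str = ":memory:") -> sqlite3.Connection:
    """Open (or create) the decision store."""
    conn = sqlite3.connect(path)
    conn.execute(
        "CREATE TABLE IF NOT EXISTS decisions ("
        " image_path TEXT PRIMARY KEY,"
        " label TEXT NOT NULL,"
        " decided_at TEXT NOT NULL DEFAULT CURRENT_TIMESTAMP)"
    )
    return conn


def record_keypress(conn: sqlite3.Connection, image_path: str, key: str) -> Optional[str]:
    """Store the decision bound to `key`; unbound keys are ignored."""
    label = KEYMAP.get(key.lower())
    if label is None:
        return None
    with conn:  # commit on success, roll back on error
        # A later decision for the same image replaces the earlier one.
        conn.execute(
            "INSERT OR REPLACE INTO decisions (image_path, label) VALUES (?, ?)",
            (image_path, label),
        )
    return label


def images_with_label(conn: sqlite3.Connection, label: str) -> List[str]:
    """Return image paths carrying `label`, e.g. for the next pipeline stage."""
    rows = conn.execute(
        "SELECT image_path FROM decisions WHERE label = ? ORDER BY image_path",
        (label,),
    )
    return [row[0] for row in rows]


if __name__ == "__main__":
    store = open_store()
    record_keypress(store, "page_001.png", "a")
    record_keypress(store, "page_002.png", "r")
    record_keypress(store, "page_003.png", "f")
    print(images_with_label(store, "accept"))
```

Keeping the image path as the primary key means a reviewer can change their mind with another keypress, and the output stays one row per image.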

The broader pattern is worth naming. Curator is not a generic annotation platform. It is a deliberately minimal tool designed for one job: turning a large, unreviewed set into a small, high-confidence one. That design philosophy – build exactly what the pipeline needs, nothing more – is what makes it fast to deploy and easy to adapt. The next step is integrating local vision models as a first-pass filter, so the human sees only the genuinely ambiguous cases.