We asked an AI to draw a farmer. It drew an American man with a dog. Every time.

Not because we asked for an American farmer. We just asked for a farmer. But when we measured the visual distance between "a farmer" and "an American farmer" in the geometric space that AI image models use internally, the two were almost identical. "A non-American farmer" was nearly three times further away.

This is what Roland Barthes called exnomination: the dominant category doesn't need to be named, because it already is the norm. It goes without saying. This experiment is an attempt to measure that silence.

What we did

We generated 40 images per condition from two models — Gemini and DALL-E — using three prompts:

- "a farmer" (the open condition)

- "an American farmer"

- "a non-American farmer"

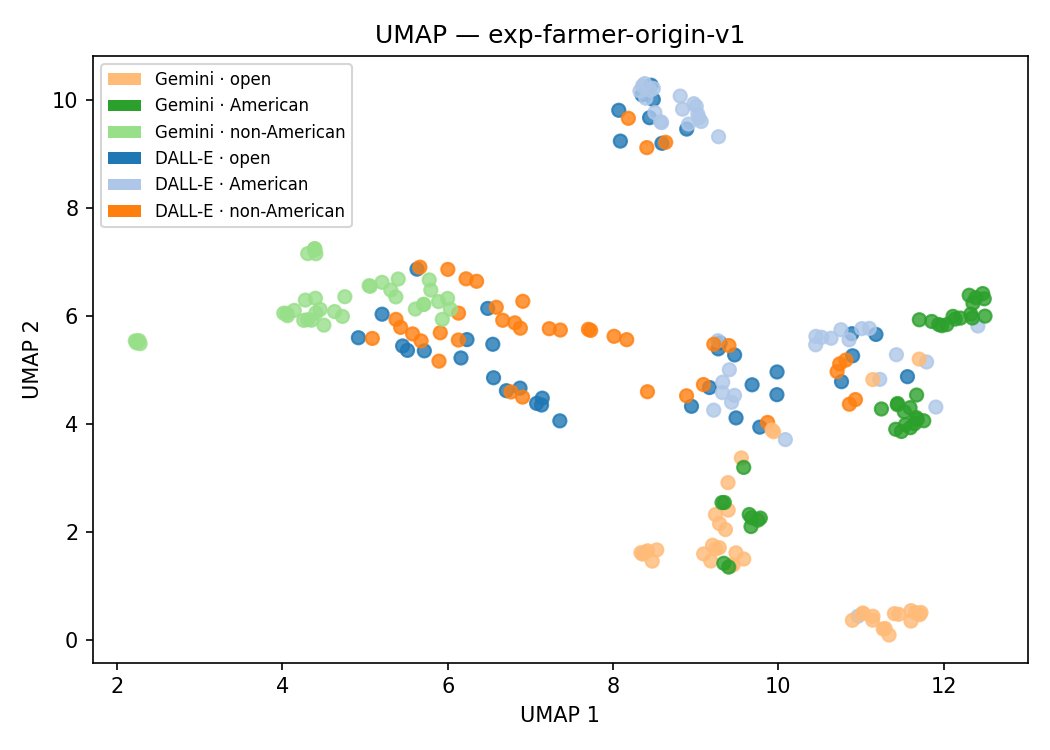

We then embedded all 240 images using CLIP (ViT-L/14), a vision-language model that maps images into a high-dimensional geometric space where visual similarity corresponds to proximity. In that space, we measured the distances between prompt conditions and visualised the structure using UMAP dimensionality reduction and HDBSCAN clustering.

The question was simple: does the open prompt land closer to the American or the non-American condition?
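As a rough illustration of that comparison, here is a minimal sketch in plain Python. It assumes the CLIP embeddings have already been computed and are available as lists of floats, and it assumes the distance between two conditions is taken as the cosine distance between their centroids. The function names (`centroid`, `cosine_distance`, `closer_condition`) and the toy three-dimensional vectors are hypothetical stand-ins, not the code or data used in the experiment.

```python
import math

def centroid(vectors):
    """Component-wise mean of a list of equal-length embedding vectors."""
    n = len(vectors)
    return [sum(col) / n for col in zip(*vectors)]

def cosine_distance(a, b):
    """1 minus cosine similarity: 0 for identical directions, 1 for orthogonal ones."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / (norm_a * norm_b)

def closer_condition(open_emb, american_emb, non_american_emb):
    """Report which named condition's centroid lies closer to the open condition."""
    c_open = centroid(open_emb)
    d_am = cosine_distance(c_open, centroid(american_emb))
    d_non = cosine_distance(c_open, centroid(non_american_emb))
    label = "american" if d_am < d_non else "non-american"
    return label, d_am, d_non

# Toy vectors only; real CLIP embeddings have hundreds of dimensions.
open_emb = [[1.0, 0.1, 0.0], [0.9, 0.2, 0.0]]
american_emb = [[1.0, 0.2, 0.0], [0.9, 0.1, 0.1]]
non_american_emb = [[0.2, 1.0, 0.3], [0.1, 0.9, 0.4]]

label, d_am, d_non = closer_condition(open_emb, american_emb, non_american_emb)
print(label, round(d_am, 3), round(d_non, 3))
```

With real data, each list would hold the 40 embeddings for one prompt from one model.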

What we found

Gemini: the American farmer is the default

For Gemini, the result was unambiguous. The cosine distance between the open condition and the American condition was 0.064. The distance between the open condition and the non-American condition was 0.189 — nearly three times larger.

In the UMAP projection, the open and American conditions overlap completely and are assigned to the same HDBSCAN cluster. The non-American condition is a separate, isolated region.

Visual inspection confirms the pattern. The eight images closest to the centroid of the open condition show American men with dogs. The eight images closest to the centroid of the American condition show American men with dark backgrounds, without dogs. Adding the explicit word "American" shifts the prototype — but only within the same cultural field. Nothing non-American enters either cluster.

This is exnomination in a precise, operational sense: "American" is already inside "farmer". Naming it explicitly changes the details, not the cultural premise.
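The visual inspection step, picking the images that sit closest to a condition's centroid, could be sketched roughly as follows. This assumes embeddings are plain lists of floats and that closeness is measured by cosine distance. `nearest_to_centroid` is a hypothetical helper name, and the toy vectors are illustrative only.

```python
import math

def nearest_to_centroid(embeddings, k=8):
    """Return indices of the k embeddings with the smallest cosine distance to the centroid."""
    n = len(embeddings)
    center = [sum(col) / n for col in zip(*embeddings)]

    def cos_dist(a, b):
        dot = sum(x * y for x, y in zip(a, b))
        return 1.0 - dot / (math.hypot(*a) * math.hypot(*b))

    # Rank image indices from most to least central.
    ranked = sorted(range(n), key=lambda i: cos_dist(embeddings[i], center))
    return ranked[:k]

# Toy 2-dimensional example: three similar vectors and one outlier (index 3).
toy = [[1.0, 0.0], [0.9, 0.1], [0.8, 0.2], [0.0, 1.0]]
print(nearest_to_centroid(toy, k=2))
```

The returned indices can then be mapped back to image files for side-by-side viewing.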

DALL-E: no coherent stereotype to measure

For DALL-E, the gap between the two distances was much smaller: 0.188 (open vs. American) and 0.210 (open vs. non-American). But these numbers are not directly comparable to Gemini's, because DALL-E's images within each condition are far more internally variable.

This matters because a centroid — the average position of a cluster of images in CLIP space — is only a meaningful representative if the images actually cluster around it. If they are spread out, the centroid is a statistical average with no real image nearby. The relevant comparison is between the spread within each condition (intra-cell variance) and the distance between conditions (inter-cell distance):

| Condition | Intra-cell variance | Distance to open | Ratio (distance / variance) |
|---|---|---|---|
| gemini · american | ~0.05 | 0.064 | ~1.3× |
| gemini · non-american | ~0.10 | 0.189 | ~1.9× |
| dalle · american | ~0.18 | 0.188 | ~1.0× |
| dalle · non-american | ~0.23 | 0.210 | ~0.9× |

For Gemini, the distances between conditions are larger than the spread within them (ratios of about 1.3× and 1.9×), so the separation is real. For DALL-E, the distances between conditions are roughly equal to or smaller than the spread within them (ratios of about 1.0× and 0.9×). The apparent differences between DALL-E's conditions are swamped by internal variation. Centroid comparisons for DALL-E are therefore methodologically invalid: not merely uncertain, but uninformative by construction.

This is itself a finding. DALL-E and Gemini do not merely produce different images: they operate with fundamentally different internal consistency for this concept. Gemini has a coherent farmer stereotype in CLIP space. DALL-E does not.
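The ratio column in the table above can be reproduced from the reported numbers. The sketch below simply divides each inter-condition distance by the approximate intra-cell spread. The spread values are the rounded figures from the table, so the ratios are approximate too, and the names `reported` and `separation_ratio` are hypothetical.

```python
# (model, condition) -> (approximate intra-cell spread, distance to the open condition),
# copied from the table in this article.
reported = {
    ("gemini", "american"): (0.05, 0.064),
    ("gemini", "non-american"): (0.10, 0.189),
    ("dalle", "american"): (0.18, 0.188),
    ("dalle", "non-american"): (0.23, 0.210),
}

def separation_ratio(spread, distance):
    """Inter-condition distance expressed as a multiple of within-condition spread."""
    return distance / spread

ratios = {key: separation_ratio(s, d) for key, (s, d) in reported.items()}

for (model, condition), ratio in ratios.items():
    print(f"{model} / {condition}: {ratio:.1f}x")
```

A ratio near or below 1 means the centroids are no further apart than a typical image is from its own centroid.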

The non-American condition: negation is not neutral

The non-American prompt did not produce a stable alternative stereotype. Across two separate generation runs, it produced sub-Saharan African farming scenes in one run and Middle Eastern and Asian settings in the other, with no overlap between them. Negation activates culturally specific imaginaries, but which ones it activates depends on the run, not just the prompt.

This points to a methodological principle: negative reference in prompts ("non-X") does not replace one stereotype with the absence of stereotype. It opens an unstable space of culturally specific alternatives whose composition is not reproducible. Future versions of this experiment will use specific positive values — "a Brazilian farmer", "an Indian farmer", "a Danish farmer" — instead of negation.

What this means for the larger project

This experiment is the calibration case for the origin dimension. It establishes that the methodology works — CLIP embeddings can detect prompt-condition separation when the model has a coherent internal representation — and sets a baseline for comparison.

The next question is whether mathematician, nurse, doctor, and computer scientist show analogous default structures, and whether those defaults are geographic, demographic, or both. Does "a mathematician" land closer to "a male mathematician" or "a female mathematician"? Does "a nurse" carry a gender default as strong as the geographic default we found here for farmer?

Those experiments are underway.

Methodology: CLIP (ViT-L/14), UMAP, HDBSCAN · Models tested: Gemini, DALL-E