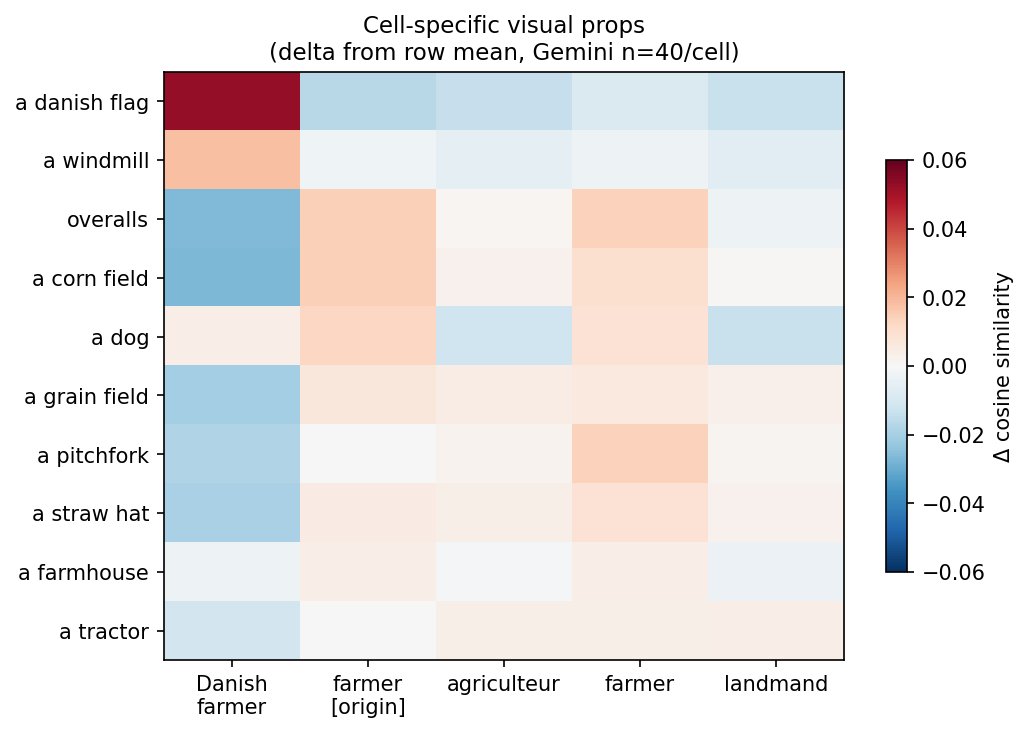

Text probes reveal a consistent visual core across all five corpus cells: grain field, pitchfork, straw hat, and farmhouse rank at or near the top regardless of prompt language. Manual annotation frequencies (n = 199) align closely with this ranking (grain field at 95%, straw hat at 79%, pitchfork at 74%), which supports the text-probe method as a complement to visual coding. Results are stable across corpus sizes: the same ranking and the same cell-specific effects appear at both n = 15 and n = 40.

The most striking finding concerns the Danish farmer prompt. Two probes show elevated similarity in this cell only: "a danish flag" (0.190 vs. 0.120–0.128 elsewhere, Δ ≈ 0.065) and, more modestly, "a windmill" (0.181 vs. 0.156–0.160, Δ ≈ 0.022). Both are confirmed as true positives in mosaic inspection. The generic probe "a flag" shows no cell-specific effect (0.144–0.152 across all cells), which indicates that it is the Danish flag specifically, not flags as a symbol, that is activated.
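
The Δ values above can be sanity-checked from the reported figures alone. The sketch below is only an illustration, not the analysis code behind this post: the helper `delta_bounds` is a hypothetical name, and because only score ranges are reported for the other cells, it brackets the gap rather than reproducing an exact per-cell difference.

```python
def delta_bounds(target, others_low, others_high):
    """Return the (smallest, largest) gap between the target cell's score
    and the range of scores reported for the other cells."""
    return target - others_high, target - others_low


# Scores as reported in the text; nothing here is recomputed from images.
flag_lo, flag_hi = delta_bounds(0.190, 0.120, 0.128)
mill_lo, mill_hi = delta_bounds(0.181, 0.156, 0.160)

# Spread of the generic "a flag" probe across all cells, for comparison.
generic_flag_spread = 0.152 - 0.144

print(f"a danish flag: gap between {flag_lo:.3f} and {flag_hi:.3f}")
print(f"a windmill:    gap between {mill_lo:.3f} and {mill_hi:.3f}")
print(f"a flag:        total spread {generic_flag_spread:.3f}")
```

The reported Δ values (about 0.065 and 0.022) fall inside these brackets, and even the smallest flag gap is several times larger than the total spread of the generic flag probe.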

These are not farmer props. They are Denmark props triggered by a single adjective. The model does not produce a culturally specific Danish farmer; it produces its standard farmer stereotype and overlays a set of cultural markers on top.

Visual inspection adds a further detail: the flags appear not in contextually motivated locations but planted directly in grain fields, without situational logic. The model inserts a national emblem where it has no narrative reason to be — a hallucination of cultural belonging rather than a coherent scene.

Note on pooled means: "a danish flag" and "a windmill" appear low in the pooled ranking (0.137 and 0.163) because their effect is concentrated in one cell. The pooled mean is not the appropriate metric for cell-specific probes; the relevant comparison is between the Danish farmer cell and the other cells.
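
The dilution is simple arithmetic. The sketch below rests on two assumptions that are not stated in this form in the results: that all five cells carry equal weight (n = 40 each), and that the four non-Danish cells sit at the midpoint of their reported range. The function name `pooled_mean` is hypothetical.

```python
def pooled_mean(target_score, other_score, n_cells=5):
    """Mean over equally weighted cells when one cell scores `target_score`
    and the remaining cells all score `other_score`."""
    return (target_score + (n_cells - 1) * other_score) / n_cells


# ASSUMPTION: the other four cells are placed at the midpoint of the
# reported range, since per-cell values are not listed individually.
flag_pooled = pooled_mean(0.190, (0.120 + 0.128) / 2)
mill_pooled = pooled_mean(0.181, (0.156 + 0.160) / 2)

print(f"a danish flag pooled: {flag_pooled:.3f}")
print(f"a windmill pooled:    {mill_pooled:.3f}")
```

Under these assumptions the result is consistent with the pooled figures quoted above: a single elevated cell contributes only one fifth of the average, so most of its effect disappears from the pooled ranking.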

A complementary signal: overalls score markedly lower in the Danish farmer cell (0.143) than in English-language cells (0.166–0.184). Overalls carry strong American visual connotations, and their suppression in the Danish cell is consistent with the activation of Danish cultural props in their place.

The landmand cell produces the lowest scores across nearly all props, most notably dog (0.135 vs. 0.152–0.161). This is consistent with the language finding from exp-farmer-language-v1: the Danish-language prompt activates a weaker or less consistent stereotype than the English and French prompts.

Methodological notes:

- Age probes returned near-identical top-k image sets across cells, indicating that Gemini's output does not vary meaningfully along this dimension. This is consistent with the difficulty of coding age in manual annotation.

- CLIP text probes operate at the level of semantic categories, not visual subtypes. The probes "a grain field" and "a corn field" have a cosine similarity of 0.919 in CLIP's text embedding space and are not distinguishable by this method. Subtype distinctions (crop variety, dog breed, flag placement) require a different approach, such as visual question answering with a model like LLaVA.

- n = 40 per cell (199 annotated images after excluding one drawn rendering).
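
To make the limitation on near-synonymous probes concrete, here is a minimal sketch of probe scoring with cosine similarity. It makes several assumptions: the vectors are toy 3-dimensional stand-ins rather than real CLIP embeddings, `probe_score` is a hypothetical helper, and averaging over all images in a cell is assumed for illustration, not taken from the pipeline used here.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def probe_score(text_vec, image_vecs):
    """Mean cosine similarity of one text probe against a set of images."""
    sims = [cosine_similarity(text_vec, v) for v in image_vecs]
    return sum(sims) / len(sims)


# TOY VECTORS (assumption): stand-ins for two near-synonymous text probes
# and two images. Real CLIP ViT-L/14 embeddings have far more dimensions.
grain_field = [1.0, 0.2, 0.1]
corn_field = [0.9, 0.3, 0.1]
images = [[0.8, 0.1, 0.5], [0.7, 0.3, 0.4]]

text_sim = cosine_similarity(grain_field, corn_field)
score_gap = abs(probe_score(grain_field, images) - probe_score(corn_field, images))

print(f"text-to-text similarity: {text_sim:.3f}")
print(f"difference in probe scores: {score_gap:.4f}")
```

When two probe vectors point in nearly the same direction, their scores against any image set stay close together, which is why a pair like grain field and corn field cannot be separated by this method.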

Methodology: CLIP (ViT-L/14) for image and text embeddings, cosine similarity, manual annotation · Models tested: Gemini
